What is RAG and how does it work?

What is a traditional RAG? What is a vector-based RAG? What are the problems associated with vector-based RAG?

And how does this new concept—known as Vectorless RAG (or Page Indexing)—effectively solve a significant problem? Furthermore, how does it utilize reasoning models to enhance document retrieval? So, with that, let's dive into the context.

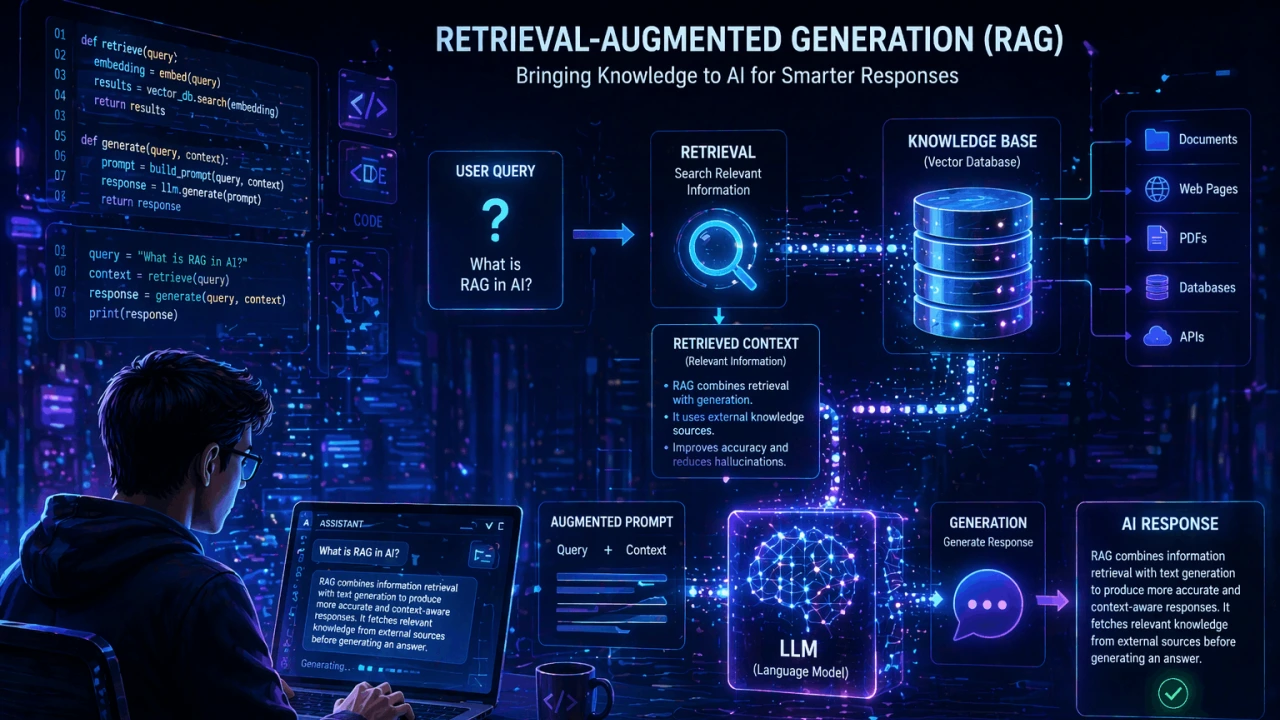

Alright, so before jumping into Vectorless RAG, let's first understand what a traditional RAG is—and even before that, let's define what RAG stands for. RAG basically stands for "Retrieval Augmented Generation." The problem statement here is quite simple. For the moment, let's set Vectorless RAG aside—let's not worry about it just yet. Let's assume that within a traditional application, you have a large collection of documents. For instance, I'll take a PDF file here; let's say this represents one of my PDF files. You might have numerous PDF files, or perhaps a single PDF file containing many pages.

Now, a user wants to perform a Q&A session based on this content using AI. So, essentially, I have these pages (it could be three pages, or it could be three hundred pages) and I need to facilitate a Q&A interaction over them. The simplest, most naive solution would be this: let's consider what the user does. The user will provide you with a query, right? A specific question, which is also referred to as a "prompt". So, let's assume this is my query. What you can then do is feed this into an LLM. You can use any Large Language Model, whether it's a GPT model from OpenAI, a Claude model from Anthropic, or any other model.

Let's assume this represents my model. Basically, what you can do is simply feed *all* of these documents directly into the model. You take all your documents, all your content, and include it within the prompt itself; you also include the user's query within that same prompt. The LLM can then process this input and generate a corresponding output. This is the simple, "naive" solution: you provide the entire content of the documents, supply the user's query, make an LLM call, and receive your output. For a page or two it works. But as you can see in the diagram, things are not that simple. There are a lot of problems with this particular approach.

The first problem is that the context is very large. These files can be very big, with a lot of content in them, and LLMs have a limited context window. That means if you put too much content in, the LLM call can fail. One or two pages is completely okay, but you cannot put in 3,000 pages, and even with 100 pages in your PDF there is a high probability that your LLM will fail. Still, let's assume that one or two years from today the context window of LLMs will increase and you can ingest even 3,000 pages.

The second problem is that with so much context, the LLM will start hallucinating. If you have a PDF of 3,000 pages and you ingest all of it, the LLM has too much context, so the quality of the output is not going to be good. There is no focus. You have ingested the entire book, so the answers you get may be generic rather than focused.

So that's one problem, and the third problem is cost. Suppose the user's query is very simple and only concerns page 5. If you ingest all 3,000 pages into every LLM call, you obviously pay a lot for the tokens, because with LLMs everything is a token and tokens are costly. So this is not an efficient solution. Why should I send 3,000 pages for a single user query? It increases my cost and it even decreases the quality of my output.
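
To make the cost argument concrete, here is a rough back-of-the-envelope sketch. The words-per-page figure, the tokens-per-word ratio, and the price are all assumptions made up for illustration, not numbers from any provider.

```python
WORDS_PER_PAGE = 500             # assumption: a fairly dense page
TOKENS_PER_WORD = 1.3            # assumption: rough rule of thumb for English text
PRICE_PER_MILLION_TOKENS = 3.00  # hypothetical input price in USD, not a real quote


def estimate_tokens(word_count: int) -> int:
    """Rough token estimate for a given number of words."""
    return round(word_count * TOKENS_PER_WORD)


def estimate_cost(token_count: int) -> float:
    """Input cost in USD at the hypothetical price above."""
    return token_count / 1_000_000 * PRICE_PER_MILLION_TOKENS


if __name__ == "__main__":
    whole_book = estimate_tokens(3000 * WORDS_PER_PAGE)  # send all 3,000 pages
    one_page = estimate_tokens(1 * WORDS_PER_PAGE)       # send only the page that matters
    print(f"whole book: {whole_book} tokens, ${estimate_cost(whole_book):.2f} per question")
    print(f"one page:   {one_page} tokens, ${estimate_cost(one_page):.4f} per question")
```

Whatever the real numbers are for your model, the ratio is the point: every question pays for the whole book even when one page would do.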

So this is a known problem with LLMs, and that is where RAG comes into the picture. Again, I am not talking about vectors or vectorless yet; we are just understanding the problem statement. So here you have the traditional RAG system, which solves this problem: how do I get large documents into an LLM? Inside traditional RAG you have two phases. Phase one is known as the indexing phase, and phase two is known as the query phase.

So first let's talk about the indexing phase. What is the indexing phase? The user will give you some files. They can be PDFs, Excel sheets, document files, any kind of file, but for now let's assume PDF files only, because that's the most common format. The first thing you have to do is chunk these files into many segments.

I can chunk using a particular algorithm. The simplest chunking is page by page: if I have 3,000 pages, I make 3,000 chunks of my PDF file. That works perfectly fine. Second, if you want smaller chunks, you can chunk paragraph by paragraph.

So basically you are doing some kind of chunking and splitting of the documents. Paragraph-by-paragraph chunking is the most common, but it also has a problem: if a paragraph is very big, you can still run out of context window. So what do people usually do? They use fixed-window chunking. I pick a size, say 500 characters, or rather let's take 500 words, not characters.

So I chunk this entire PDF into pieces of 500 words each: this is my chunk 1, this is my chunk 2, this is my chunk 3, and so on. So the first part was chunking.
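
The fixed-window chunking just described can be sketched roughly like this. It assumes the PDF text has already been extracted into one plain string; `chunk_by_words` is a name made up for this example.

```python
def chunk_by_words(text: str, chunk_size: int = 500) -> list[str]:
    """Split text into consecutive chunks of at most `chunk_size` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    words = text.split()
    return [
        " ".join(words[start:start + chunk_size])
        for start in range(0, len(words), chunk_size)
    ]


if __name__ == "__main__":
    # Stand-in for text extracted from a PDF: 1,200 dummy words.
    sample = " ".join(f"word{i}" for i in range(1200))
    chunks = chunk_by_words(sample, chunk_size=500)
    for number, chunk in enumerate(chunks, start=1):
        print(f"chunk {number}: {len(chunk.split())} words")
```

Notice that the split points depend only on the word count, not on where a sentence or an idea ends.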

Now you convert these chunks into vectors using an embedding model. You don't use the regular chat models here. If you are using OpenAI, for example, there are special models made just for this. If you look at the vector embeddings section of the OpenAI docs, you can see models like `text-embedding-3-small` and `text-embedding-3-large`. So you use separate, specialized models for these embeddings.

So what you basically do is take these chunks and call your embeddings model, which can be OpenAI's or whichever provider you want to use, and you get back an array of numbers. Because what are vectors at the end of the day? Just arrays of numbers.

So that means I picked up the chunks, gave them to my vector embedding model, and got the embeddings back. Now you have to store these embeddings somewhere in a database.

You can't really keep vector embeddings in a traditional database; there are special databases for them. For example, you have Pinecone, which is a vector database. Similarly you have Chroma DB, Weaviate, Milvus, Qdrant, and many others. Even Postgres comes with an extension, known as pgvector, which turns it into a vector database.

So we save each vector in the DB along with its chunk: this was the chunk, these were its vector embeddings. For every chunk you made, you save one vector embedding in your database. So that was the first part, indexing. That's it: take the PDF file, chunk it up, make its vectors, and save them in the database. Your indexing phase is done. Now comes the second phase, the user query, when the user wants to chat over the PDF file and ask something about it.
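
Here is a minimal sketch of that indexing phase. To keep it runnable without any API key or database, `toy_embed` is a made-up stand-in for a real embeddings call (it just hashes words into a few buckets), and a plain Python list plays the role of the vector database. Neither is how a real provider or Pinecone works internally.

```python
import hashlib
import math

DIM = 8  # toy dimensionality; real embedding models return far longer vectors


def toy_embed(text: str, dim: int = DIM) -> list[float]:
    """Hypothetical stand-in for an embeddings API: hashed bag of words, L2-normalised."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0  # avoid dividing by zero on empty text
    return [v / norm for v in vec]


def build_index(chunks: list[str]) -> list[dict]:
    """Save every chunk next to its vector, as you would in a vector database."""
    return [
        {"chunk_id": i, "text": chunk, "vector": toy_embed(chunk)}
        for i, chunk in enumerate(chunks)
    ]


if __name__ == "__main__":
    index = build_index(["the car has a red engine", "the contract ends in march"])
    for record in index:
        print(record["chunk_id"], [round(v, 2) for v in record["vector"]])
```

In a real setup you would swap `toy_embed` for your provider's embeddings call and the list for an insert into whichever vector database you picked.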

So your user comes here and gives you a query. They say, I have a query (I spelled "query" wrong on the board). This is my query; please answer it according to my PDF file. Now, first of all, you create vector embeddings of this query too, using the same model. Look, we are not using a regular LLM here.

We are using a vector embedding model. So you convert the user's query into vector embeddings using the same model, and it gives you back some numbers. Let's say something like 3, 2, 5 and 6 came back as the vector embedding. Now you can search for similar numbers in your database.

Remember, this was our Pinecone database. So you go into the database and do a vector similarity search. That means you tell it: I got these numbers, 3, 2, 5, 6; search by these and bring back whatever is close. If it brings back a particular vector point, you can get the chunk stored with it.

Let's say the user asked something about a car. Wherever the PDF talks about that car, those chunks will be found through the vector embeddings as relevant chunks. You pass another parameter here, which we call `top_k`: how many relevant chunks do I need? Say I set `top_k` to 5: bring me the top 5 relevant chunks, not the entire PDF file, just the chunks. And the size of each chunk is whatever we decided, maybe 500 words, maybe one paragraph. So you get back chunks 1, 2, 3, 4 and 5.

Your PDF file could be 3,000 pages, but by using the user's query you smartly found only the relevant chunks. Relevant means that each chunk discusses what the user's query is about. Now you take these chunks plus the user's original query, and with those two things you make a simple API call to an LLM. This can be a GPT-4 model, Claude from Anthropic, whatever you want. You tell it: the user has asked this query, and here are the relevant chunks. You perform one generation on top of that and you get a result, which you return to the user. And this is how your traditional RAG system works. Got it? You use vectors in this. Okay. Now let's understand the problem behind this vector RAG, as it is called.
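
Before getting into those problems, here is a rough sketch of the query phase just described: compare the query vector with the stored vectors, keep the `top_k` closest chunks, and build one prompt from them. The tiny four-number vectors are hand-written for illustration (the query reuses the 3, 2, 5, 6 from above), and cosine similarity is one common choice of similarity measure, assumed here rather than taken from any particular database.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(index: list[dict], query_vector: list[float], top_k: int = 5) -> list[dict]:
    """Return the top_k records whose vectors are most similar to the query vector."""
    ranked = sorted(
        index,
        key=lambda record: cosine_similarity(query_vector, record["vector"]),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, hits: list[dict]) -> str:
    """Combine the retrieved chunks and the user's query into a single prompt."""
    context = "\n\n".join(hit["text"] for hit in hits)
    return f"Answer using only this context:\n\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    index = [
        {"text": "The car has a 2.0 litre engine.", "vector": [3.0, 2.0, 5.0, 5.0]},
        {"text": "The warranty lasts three years.", "vector": [0.0, 9.0, 1.0, 0.0]},
        {"text": "The car comes in red and blue.", "vector": [3.0, 1.0, 4.0, 6.0]},
    ]
    hits = search(index, query_vector=[3.0, 2.0, 5.0, 6.0], top_k=2)
    print(build_prompt("What engine does the car have?", hits))
```

The string returned by `build_prompt` is what you would send in the final LLM call.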

Right? Vector RAG works fine. It is used a lot, almost every company is using it, and it's a very traditional, well-established way to do RAG over documents. But what is the biggest problem in it? The problem is chunking, because we don't have any solid justification for how we chunk. Let's say you have a page with a first paragraph, a second, a third, and a fourth, and you have blindly decided on an algorithm: I will not let a chunk go above 500 words.

So if this is your entire page, you split it 500 words at a time: pick up the first 500 words, then the next 500, then the next 500, and so on.

It is possible that some piece of information sits partly in this chunk and partly in that chunk, and because you split it in the middle, your context is lost, your data is lost. Let me open any random paragraph in front of you. For now, assume this is a story book. In the first chunk I kept the data only up to here, because my 500 characters ran out at this point.

What happens now? You can see clearly that, technically, this next part should also have been included, because only then would the story be complete. But you picked a number, 500 characters, so the first chunk ended here and the second chunk got the rest. Because you were chunking on a static number, part of the context stayed in one chunk and the rest went to the next, so the paragraph could not be kept whole. That's one problem.

A related problem is this: it could be that these three paragraphs together make one story. It's not always true that this paragraph has its own story and that paragraph has its own story. But if you chunk paragraph by paragraph, this becomes chunk one, this chunk two, and this chunk three. And on top of that, the third paragraph is very short and the second paragraph is very long. So whatever chunking you did, there is no justification behind it. We're chunking blindly. So this is one problem.

So how should I chunk? I should make relevant chunks; I should create chunks semantically, not in some hard-coded way where I take paragraph by paragraph or 500 words at a time. That is not a good way to chunk the data, because it cuts right through the context. The second problem: if you have ever seen legal documents, they usually contain references, such as "as per rule 63.7.4 of" something, with some statement before it. Now you can clearly see that this is a reference to another page. Let's say this text was on page 4, and somewhere inside the same PDF there is a page 578 where that rule is actually written down. That's usually how it goes.

So what happens now? You clearly want to read both pages, because one page holds the reference and the other holds the actual content. For this generation I want both pages. But that does not happen with chunking.

What happens with vector embeddings? The search may pick up the chunk that matches the keywords used in the query, and it may never pick up the page that the reference points to. So that's another problem with chunking. The third problem in vector RAG shows up when you perform the vector similarity search over the chunks. The search is performed on those numbers, and those numbers rely heavily on what kind of question the user is asking.

You have no control over the user. If the user writes the prompt well and happens to use the same keywords that were inside my PDF file, then the query's vector embeddings will match the vector embeddings stored in my Pinecone very easily, and I will get good, relevant chunks.

But that doesn't happen every time. Maybe the book we ingested uses certain terms, but the user is very different and asks only vague or very high-level questions, like "how do I do this?" Then it is possible that the query's vector embedding never matches the embeddings of the original document, because the user does not know how to ask. The user does not know what keywords the original book used or what they should really ask. So here we rely on the user's query being good.

Vector similarity search returns relevant documents only if the query's vector embeddings match the vector embeddings of our original document. So if the user's query is poor, we will not find relevant documents, and our LLM output will not be good. These are some of the problems in traditional RAG, and these problems are now solved, or kind of solved, by vectorless RAG. As the name says, in vectorless RAG you do not do vector embeddings at all. Here too you have two phases: number one is the indexing phase, number two is the query phase. The phases are exactly the same, but the indexing phase has changed.

There are no vectors, no Pinecone, no vector embeddings, nothing. There is not even any chunking. So what do you do? You use a reasoning model. Over time, LLMs have become smarter; they are more capable and can do more reasoning, so you rely heavily on reasoning models and have them read the documents. There is one article I want to show you, and in it there is an example based on the movie Sholay.

By the way, another name for vectorless RAG is PageIndex. The article is about how to build a vectorless RAG, i.e. no vector embeddings and no vector DB. If we start reading it, you can clearly see: PageIndex is a vectorless, reasoning-based retrieval augmented generation (RAG) approach. And what does it do? Instead of relying on semantic similarity search, which is the vector search I was telling you about, PageIndex builds a hierarchical table-of-contents tree.

This is a very important line: a hierarchical table of contents. Let's note it, because this is the indexing phase. Inside the indexing phase you are not doing any kind of chunking or vector embedding.

What you basically build is something known as a TOC tree. You must have studied trees in data structures, right? A tree looks something like this: you have something at the top, then multiple nodes below it, and you join these nodes together.

So this is what a tree looks like, and you build a table of contents in that shape. When you buy any book, it has an index, and if you have to read something, you open the index, find the right line, and go to that place. That is what we have to build. If we go back to the article: PageIndex creates this tree from the document and uses a large language model to reason over its structure. Reasoning is used here. The model first identifies the most relevant sections using the document's hierarchy, the tree, and then navigates to those sections to generate a precise answer.

So if we sum up the whole thing: traditional RAG works on similarity. What does PageIndex do? Reasoning. It is inspired by how humans read: if I give you a very thick book and ask you a question, how does your brain go about it? That is roughly what PageIndex does. And by the way, before going on, it also solves the problem with legal documents and legal contracts that I told you about.

So what does PageIndex do? Number one, if we scroll down a little: structure before search. You build a hierarchical index. This is basically your entire pipeline: you get a document, you create a hierarchical index of it, you do reasoning-based retrieval on it, and then you get an answer, instead of going through vector embeddings. So what do we do first? We build an index, something like this.

Take the movie Sholay; I am not sure whether you have seen it. Say you have the script of Sholay as a book. You can ask the LLM to go page by page and create an index of it. How is the index made? You have a root node for the document, and inside it you just put a summary of the entire movie. Then you identify the scenes inside it. In the article too, these are called scene headings.

What were the plots in this movie, what were the scenes? The LLM works that out itself; reasoning models can do it. So you get headings like Life in Ramgarh, Gabbar's Reign, The Final Showdown, and Recruitment of Veeru and Jai. These are the main headings, the main scenes where some plot twist happens, where the story changes, where one part of the story is complete.

What you have created is a table of contents. You made its headings; the content is not here, just the headings. And what goes into those headings comes from structure detection: scenes, characters, act breaks, and major transitions.

So there is no fixed chunk size in this. Instead, you identify things based on reasoning. A new character was introduced, so you noted it. There was a big twist somewhere in the movie, so you took that as a boundary. Maybe there was an ending somewhere with an emotional scene, and you took that too. You have detected the places where things change.

Then, optionally, you can add some tags. For example, you mark in blue wherever there are story segments, in red wherever there is something related to Gabbar, in purple wherever there are critical events, and in gold wherever there are other events.

Again, LLMs can do this well. You kept giving the documents to the LLM, had it reason over them, and on the basis of that reasoning you generated a tree, a hierarchical mapping of the document, and this is my root node.

The root node is Sholay, and below it I have all these first-level branches, and then whatever happened inside each of them. So based on that you have built a tree. Now, what data do we store inside each node? Look, this is a node, this is also a node, this is also a node. Number one, the title of that node. Then the ID of that node, and this node ID is very important: it is basically a reference back to the original document. Here we are only keeping the tree structure; the node ID tells you the actual page number, where to find that thing in the original document.

Then we keep a summary of the node, and its child nodes. This is how you keep a tree in memory. Now, whenever a user asks a query, let's say "Why did Thakur lose his arms?", you don't have to give the LLM the full movie.
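
Before following that query through, here is a rough sketch of the tree built so far. Everything in it is an assumption for illustration: the `TocNode` fields, the `build_toc_tree` helper, and above all the two stub functions, which stand in for the LLM calls that would really detect scene headings and write summaries. It also builds only one level below the root; deeper levels would repeat the same idea.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class TocNode:
    node_id: str
    title: str
    summary: str
    start_index: int  # first page covered (0-based, inclusive)
    end_index: int    # last page covered (0-based, inclusive)
    children: list["TocNode"] = field(default_factory=list)


def build_toc_tree(
    pages: list[str],
    detect_heading: Callable[[str], Optional[str]],
    summarize: Callable[[str], str],
    root_title: str,
) -> TocNode:
    """Start a new section wherever a heading is detected; no fixed chunk size."""
    sections: list[tuple[str, int, int]] = []  # (title, first page, last page)
    for page_no, text in enumerate(pages):
        heading = detect_heading(text)
        if heading is not None or not sections:
            sections.append((heading or "Untitled", page_no, page_no))
        else:
            title, start, _ = sections[-1]
            sections[-1] = (title, start, page_no)  # extend the current section
    children = [
        TocNode(f"{i:04d}", title, summarize("\n".join(pages[s:e + 1])), s, e)
        for i, (title, s, e) in enumerate(sections, start=1)
    ]
    return TocNode("0000", root_title, summarize("\n".join(pages)),
                   0, max(len(pages) - 1, 0), children)


def fake_detect_heading(page_text: str) -> Optional[str]:
    """Stub for an LLM call: treats a first line starting with 'SCENE: ' as a heading."""
    first_line = page_text.splitlines()[0] if page_text else ""
    prefix = "SCENE: "
    return first_line[len(prefix):] if first_line.startswith(prefix) else None


def fake_summarize(text: str) -> str:
    """Stub for an LLM call: just truncates the text."""
    return text[:60]


if __name__ == "__main__":
    pages = [
        "SCENE: Life in Ramgarh\nThe village wakes up.",
        "More village life.",
        "SCENE: Gabbar's Reign\nThe bandits arrive.",
        "SCENE: The Final Showdown\nThe last fight.",
    ]
    tree = build_toc_tree(pages, fake_detect_heading, fake_summarize, "Sholay")
    for child in tree.children:
        print(child.node_id, child.title, child.start_index, child.end_index)
```

The `start_index` and `end_index` fields are what let you go from a node back to the original pages later.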

The full script is not sent, so you are not paying the LLM for all of it, and I don't increase the size of my context. There are no embeddings and no similarity search. Instead, the LLM uses the user's question to traverse your tree.

It traverses the tree, breadth first, and picks up the relevant nodes. We are not working on the original document right now. We only have the table of contents, the small tree we just created, and we work only on that. So the LLM is called with something like: the user has asked a question.

Why did Thakur lose his arms? Here is my tree. Search through it and tell me which nodes you think are relevant, and bring their child nodes too. So what does it do? With the user's question it goes to the hierarchical map, reads the summary of each node, and based on the structure it picks whichever nodes it finds relevant. Because you have a very good tree, it picked this node, this node, and this other node.

Now that you have found the relevant nodes, you have their node IDs, so you can fetch the original documents, and because each node had a summary, you can use that too.
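
The query phase above can be sketched roughly as follows. This is not the PageIndex SDK's API; it is a hand-rolled illustration in which the tree is a plain nested dict, `ask_llm` is a hypothetical callable standing in for a reasoning-model call, and the model is assumed to reply with a JSON list of node IDs.

```python
import json
from typing import Callable


def render_outline(node: dict, depth: int = 0) -> str:
    """Titles and summaries only: this is all the model reads while choosing nodes."""
    line = f"{'  ' * depth}[{node['node_id']}] {node['title']}: {node['summary']}"
    child_lines = [render_outline(child, depth + 1) for child in node.get("children", [])]
    return "\n".join([line] + child_lines)


def find_nodes(node: dict, wanted: set[str]) -> list[dict]:
    """Collect every node in the tree whose node_id was selected."""
    found = [node] if node["node_id"] in wanted else []
    for child in node.get("children", []):
        found.extend(find_nodes(child, wanted))
    return found


def retrieve(tree: dict, pages: list[str], question: str,
             ask_llm: Callable[[str], str]) -> list[str]:
    """Let the model pick nodes from the outline, then fetch the original pages."""
    prompt = (
        "Here is a table of contents with summaries:\n"
        f"{render_outline(tree)}\n\n"
        f"Question: {question}\n"
        "Reply with a JSON list of the node_ids worth reading."
    )
    wanted = set(json.loads(ask_llm(prompt)))  # assumes the reply is valid JSON
    selected = find_nodes(tree, wanted)
    return ["\n".join(pages[n["start_index"]:n["end_index"] + 1]) for n in selected]


if __name__ == "__main__":
    tree = {
        "node_id": "0000", "title": "Sholay", "summary": "Whole script.",
        "start_index": 0, "end_index": 3,
        "children": [
            {"node_id": "0001", "title": "Life in Ramgarh", "summary": "Village life.",
             "start_index": 0, "end_index": 1, "children": []},
            {"node_id": "0002", "title": "Gabbar's Reign", "summary": "Gabbar and Thakur.",
             "start_index": 2, "end_index": 3, "children": []},
        ],
    }
    pages = ["page 0 text", "page 1 text", "page 2 text", "page 3 text"]
    stub_llm = lambda prompt: '["0002"]'  # a real model would reason over the outline
    print(retrieve(tree, pages, "Why did Thakur lose his arms?", stub_llm))
```

The pages that come back, together with the question, would then go into the final answer-generating call.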

So only the LLM's reasoning is used here to decide which nodes I need, and after that you hand over just that data and do the retrieval. So if I go back for a second: the user asked something, that was the user's query. First of all, maybe this node is relevant for me, okay, and this node is relevant, but this node is not. Whatever is not relevant, leave it aside.

Whatever is relevant, I pick up. So according to the user's query, I get a subset of the tree that is relevant for me. And how does the LLM decide which node is relevant? From the summary we kept inside each node. Then I can go to the original document, fetch the original pages, give them to the LLM, and that completes the retrieval.

So what do you put inside each node? First the node ID, which is a unique ID. Then a location, maybe a pointer to the original page, or whatever you want to keep. After that you keep the title of every node, a description of every node, and a summary of every node. And obviously its child nodes, which is again an array of nodes. So this is basically how you construct the tree. And here the LLM itself decides everything: what should I read, where do I want to go. So that means no vectors, no vector embeddings, no chunking, no semantic search. It works purely on the reasoning capabilities of the LLM.

So this is basically what PageIndex is trying to do. It works on navigation and extraction, and this mirrors how humans read.

When you want to know something from a book, you navigate through the index and then extract what you need; it works on the same belief. So that's the main thing. Now, in vectorless RAG there is also a repository that introduces this, called PageIndex (again, not sponsored). It is a Python SDK, I believe; yes, it is in Python. It gives you PageIndex: you give it a document, it builds a tree, then it does LLM reasoning on the query, and you get an answer. That is the whole pipeline. This is a relatively new thing. And just in case you want to see what a tree looks like, this is what a tree looks like.

You have a title, a node ID, and a summary, and then child nodes. Each child again has a title, node ID, start index and end index (where it sits in the original document), and a summary, and there can be child nodes inside it too. So you construct a tree. LLMs have become smart over time, and it is the reasoning ability of those models that is being used here.

So that is how vectorless RAG comes into the picture. If you like this approach, let me know; I am even ready to code it.

Recently, in one of our projects, we converted our traditional RAG to a vectorless RAG. There are two trade-offs. The first is cost, because reasoning models are expensive. The second is speed: the model has to reason and do a tree traversal, so it takes a little time for the LLM to reach the final output, because it reasons a lot before that.

So we are trading time for accuracy. That is the trade-off we have to accept. Beyond that, there are many relatively new things. Of course, it is an AI world, things change very rapidly, and new things keep coming. So let's wait and see what comes next.
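A rough sketch of why the traversal costs time: each level of the tree needs one reasoning step before the next level can be opened. In the Python below, `keyword_chooser` is a deliberately simple stand-in for the reasoning-model call (it only counts shared words), and the tree, the function names, and the query are all hypothetical.

```python
from typing import Callable, Dict, List

# Hypothetical tree: each node is a dict with a title, summary, page range, and children.
TREE = {
    "title": "Annual Report", "summary": "whole document", "pages": (1, 12),
    "nodes": [
        {"title": "Financials", "summary": "revenue costs margins", "pages": (1, 6),
         "nodes": [
             {"title": "Revenue", "summary": "revenue by region", "pages": (2, 4), "nodes": []},
             {"title": "Costs", "summary": "operating costs", "pages": (5, 6), "nodes": []},
         ]},
        {"title": "Risks", "summary": "market regulatory risks", "pages": (7, 12), "nodes": []},
    ],
}


def keyword_chooser(query: str, children: List[Dict]) -> int:
    """Stand-in for a reasoning-model call: pick the child whose summary
    shares the most words with the query. A real system would prompt an LLM here."""
    words = set(query.lower().split())
    scores = [len(words & set(child["summary"].split())) for child in children]
    return scores.index(max(scores))


def navigate(query: str, root: Dict, choose: Callable[[str, List[Dict]], int]):
    """Walk from the root to a leaf, asking `choose` once per level."""
    node, calls = root, 0
    while node["nodes"]:
        node = node["nodes"][choose(query, node["nodes"])]
        calls += 1  # each level costs one (slow, paid) reasoning call
    return node, calls


leaf, calls = navigate("what were the operating costs", TREE, keyword_chooser)
print(leaf["title"], leaf["pages"], "reasoning calls:", calls)
```

With a real reasoning model in place of `keyword_chooser`, every increment of `calls` is a paid and comparatively slow request, and the calls are sequential, which is where the extra latency and cost described above come from.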

But let me know in the comments what you feel about this vectorless RAG. What are your takes on it?

Elon Musk on the Future of Technology: AI, SpaceX & Innovation Trends

Elon Musk - The Transition of Technology

Elon Musk has activated his god mode. It was arguably active since childhood, but now he has fully owned it. The basic thing is that he literally wants to go to Mars, and look at how he is going about it: he has launched his Terra Fab project, and he has a thousand other ways. If anyone thinks he is just another billionaire who wants to stay rich and stay ahead of the competition, that is not what this is. If competing with other billionaires were the goal, he could easily beat them.

He could do that with normal, conventional businesses that keep growing his money. What usually happens these days? Big billionaires like Bill Gates pose a problem, find a solution to it, and earn money from it, fooling people along the way; that is how capitalism has played out so far. What Elon Musk is thinking about has nothing to do with your everyday problems. That man is trying to earn money by taking human civilization to the top.

And he wants to earn money because he literally wants to reach Mars. It sounds funny, but let's start here. Take me: I am a decent science student and I study a lot. Two or three years ago I had one thought about this man wanting to go to Mars. Take the planet Neptune (Varun): even if we are able to reach it, it will take about 30 years.

At the speed of the missions we send toward Mars today, it takes 30 years to get there and 30 years to come back. Is there any sense in spending years like this, only to grow old and die? What would you even do after going to Mars or some other planet? Elon Musk talks about interplanetary travel: we have to travel between planets if we want to survive, because what we have here will not survive forever.

A person like me, who is not thinking of going to Mars, will conclude from his knowledge of science that time itself is the obstacle, so what is the point of going? But listen to how things work in this guy's mind. He is intelligent enough to draw on other scientists, those who have proposed theories or proved something, and he uses their research in what he says. So what I am telling you has been said many times by Elon Musk directly, and many times by other scientists whose ideas get mixed into his theories. Now listen to the concept of time. (Elon, Ilan, say it however you like; I don't pay much attention to pronunciation.) Elon Musk's argument goes like this. If you leave food out in the open, be it an apple or some other fruit, it goes stale within a day or two.

Eat it before it goes bad, or keep it in the fridge and it will last four or five days, maybe a week. Put it in cold storage, and specially prepared food items last for years. Why is that? It is about their biological age: their biological time runs slower than absolute time. Food keeps in cold storage because we have found ways to stop some things from rotting. Absolute time, whatever it is, keeps running as it is.

But their own biological time is different. Think of it from the apple's point of view: my time has slowed down while the rest of the world goes on as usual, so I age slowly and can survive for a long time. Now, Elon Musk's belief is that if cold can stretch the biological age of fruit like this, why not that of humans? You will say there is no use in freezing humans.

A frozen person would not be able to breathe, the game would be over, and there would be no use. The thing is, Elon Musk believes there are trillions of cells inside our body that run it, and those trillions of cells are all aging at the same time. It does not happen that one hand turns sixty while the other turns thirty; all the trillions of cells in the body age together.

What does this mean? That they receive information from the brain, through our central nervous system and trillions of synapses. The information arrives that, say, this person is smoking a cigarette, so his age should advance faster, or that this person is exercising, so it should advance more slowly.

And Elon Musk believes that some part of the central nervous system, or of the brain, conscious or unconscious, is sending this information. If we understand which part is sending it, then even if we cannot stop it completely, we can manipulate it. Let me explain.

When you switch on the TV with the remote, you have not called anyone, and you have not plugged anything in. The remote sent photons, and they reached the TV. Those photons carried information: turn the TV off, or turn it on. We used those photons for our benefit. Photons already exist in nature; we manipulated them. We did not destroy them, and we did not try to exert extreme control over them.

So this means that what is a journey of a hundred years for someone could become a journey of just a few seconds. It sounds a bit dramatic, and you may wonder what I am saying, but allow some creative freedom here, because nothing has been proved yet; it may happen in the future if people like Elon Musk pursue it. The idea is this: your life goes on normally, you have to go to Mars or Neptune, and it takes 30 years, but you slow down your biological clock so that those 30 years feel like 5 seconds to you. A little mind-blowing.

Have you ever thought about this? Even after owning so many companies, Elon Musk has so much time that he can sit comfortably and tweet on Twitter, and poke at others too. After creating so many companies, he still has that much time because he manages his business very neatly and efficiently.

Be it a massive empire like Elon Musk's or a small startup run by one individual, a great idea or a great product alone does not determine the success of a business. Execution matters more than the idea: day-to-day operations, invoicing, accounting, recruitment, and keeping your customers happy. How can all this be done in the best possible way?

Doing it all on a single platform is exactly where Odoo comes in. Odoo provides beautifully designed apps that can meet every need of your business, from generating invoices and marketing to running your website and managing your books, sales, and inventory.

Your stock is updated instantly, and each customer's purchase history is available when you need it. For so many things you do not need dozens of applications; you need a platform like Odoo, where you get all the services under a simple and affordable subscription.

All the features are available, and one more thing: your first app is 100% free for life. So take your business digital and run it smoothly. The link is in the description and the pinned comment; sign up and try Odoo once.

Well, let's come back to our topic. When I hear this, the thought immediately comes to my mind that things now being established in science are also found in Hindu epics. There is a similar story about Narad Rishi and Lord Vishnu. Narad Rishi is standing with Lord Vishnu, and Lord Vishnu says, "Give me a glass of water." Narad Rishi goes to the river to fetch it.

While filling the glass at the river, he meets a woman and falls in love with her. They get married, have children, and live a good, prosperous life. The years pass, and then a terrible flood comes and sweeps away the entire family. And all of this takes place within a few seconds.

After this, he is standing in front of Lord Vishnu again with the glass of water from the river. He lived decades of life in the time it took to hand that water to the god. You may find it traumatic, and it is, but notice how closely it matches future science and what scientists are thinking about. I am not saying that Hindu philosophy is 100% correct.

Is it all true? No. But I believe the rishis and munis of earlier times, who practiced meditation and yoga for thousands of years, were on another level and knew something that we do not know. Well, let's come back to the topic of Elon Musk.

So Elon Musk launched a Terra Fab project. We haven't talked about Terra Fab yet; I have only told you the concept of time. Before getting to Terra Fab, let me tell you one thing. Elon Musk's mind is so sharp that he knows very well that he needs money to get to Mars, and that he will get that money from this world, so he has to keep his business here very real, like the time concept I told you about.

The idea there is that some things inside your brain send information to your cells, and your cells act on that basis. What is he doing through his company Neuralink? Exactly this. He is trying to read the brain activity of people who cannot speak or hear, so that it can be known what their brain is signalling. The signal is coming from the brain, but they are not able to speak; somewhere the link between their cells and brain is broken.

Neuralink already had an achievement in 2026, in which they measured a person's brain activity, read it, and then, probably using AI, spoke what he was thinking in his own voice. The person is not speaking; his brain activity is being read and spoken. Now imagine that he becomes fully capable of doing this. It is a business worth billions of dollars, and this business will serve his purpose.

Even if everything is about going to Mars or If you want to understand the world, you can create a business. Then on the other side Darorati and KK Create gets angry like that brother, I don't think he is intelligent, he is stuck in the podcast. You will listen to his podcasts and you will get to know from the way he talks, how much knowledge he has about things. Look at his entitlement, man, he is thinking that he is Modi and Owl Gandhi, it is true brother, you are thinking that he is a politician, you should criticize him.

Let's clarify their policies. You are discussing this policy with me, brother, what are your entitlements? If you are sitting with this then you can judge this also. Leave all these things aside, tell everyone what is the scene of the fam. That the coming age is not the age of addiction but the biggest problem going on today is the age of addiction.

Data centers need a lot of water and a lot of electricity for cooling, and in many places that have data centers the villagers are left without water: their groundwater is either exhausted or coming up very dirty, and electricity is in short supply too.

That is a big problem. So what did SpaceX do next? It came up with a plan to launch millions of satellites, one million, even one billion satellites, and along with them billions of chips, to build a supercomputer in space that will do fifty times more computing than all the advanced AI in the world combined. It is so powerful that it will consume more electricity than the USA produces: the USA generates 0.5 terawatts, and that supercomputer needs one terawatt.

In other words, the US does not generate as much electricity as the supercomputer needs. Why take the whole thing, all the chips and infrastructure, to space? Because in space you do not have the problem of cooling, so you do not spend a lot of money on it; it is an extremely cold environment with hardly any atmosphere. Second, in space you do not need grid electricity, because all the satellites are solar powered, and the higher you go, the more solar power you can collect, since there are no clouds and no atmosphere in the way. So this man is offering the US government a solution on a platter: you face a lot of problems with Taiwan, where the chips come from, get finished somewhere else, and have their defects corrected somewhere else again. I will make all the chips in the US; you just invest. So he is also creating a business worth billions of dollars. But for his real purpose, the chips he is talking about sending into space are Optimus's chips. Optimus is his robot.

To go to Mars you will need a robot, humans cannot go and prepare everything there first. The robot will have to go, he will go there and prepare everything to live on Mars. Ed Same time is believed by some people to be very high on Mars.

If you want even a one percent chance of living there, you will have to dig into the ground of Mars. And who will do the work for you underground? Elon Musk has never said that The Boring Company was made for this, but the President of SpaceX has said many times that The Boring Company can be useful if we get there. So there should not be any doubt in your mind. But what does he get from making such a big supercomputer? If it were only about earning money, he could have done that with any other business. He is already at the top; why does he need to go to the next level and take such a big risk? Actually, there is a reason he wants to build such a big supercomputer. The next five minutes are going to be the most interesting five minutes for you. I explained in one of my previous videos that Elon Musk believes this world is a simulation and not real. Now how does he arrive at that?

I will give you a small example; if you want the full explanation, you can watch that entire video. He says this: take a photo, like the ones you used to take on old phones. If you zoom in too far, you see small squares. Those squares are called pixels.

Pixels mean that the photo is not really a photo; it is made of small squares, and each square carries its own data: show this square as red, show this one as yellow. If the person has a green bag in his hand, those squares are shown as green, and from thousands or lakhs of such squares the bag becomes visible. So these small pixels were designed so that something can be displayed on the screen. Whatever is on that screen is the world of the phone; PUBG can be in it, anything can be in it, but it lives inside the pixels.

You can understand it this way: if you zoom into a photo far enough, it breaks down into pixels, millions of them. A video is the same thing: when you show 60, 70, or 80 photos in a second, one after another, that becomes a video.

This is how a video is made: a video is just a combination of photos. Everything beyond those pixels, beyond the entire screen, is outside that world. If you have written the code for the phone, you can see inside those pixels. But whatever is inside the pixels, say the character who runs around in PUBG, cannot see outside, because he exists only within the code; he has grown up inside our phone. We have not even powered him with AI yet. Right now he has no conscious intelligence of his own; everything is automatic. The idea is that we used our phone's screen to create a simulation across its pixels.

There are no conscious things in that simulation; everything in it is non-living. And here, too, you see non-living things in the world apart from us.

When AI comes tomorrow, there may well be AI and consciousness inside the screen of that phone. On that side of the pixels is their world, and on this side is ours; the pixel is the boundary between the two worlds. We cannot go inside that boundary, and whoever is inside cannot come out. Now, Elon Musk believes that the pixels of our world are space and time, distance and time.

When you zoom far into a photo on a phone and reach the last pixel, you cannot zoom any further. Why? Because we did not write code for anything smaller than that pixel. But if someone inside the screen tried to zoom into those pixels, he would not understand why there is nothing smaller; he would think there should be. In the same way, we do not understand what the smallest unit of time is if you keep dividing it into milliseconds and microseconds, or what the smallest distance is. If I keep dividing my finger into smaller and smaller pieces, what is smaller than that, and smaller than that? Our mind just gives up; we do not know what the smallest thing is. Elon Musk and many other scientists believe that the smallest thing exists; it is just not within the control of us humans.

And for that we need a supercomputer capable of very heavy computation, which we do not have right now. He is building exactly that supercomputer. At the same time, space travel is also very important. Now, people sometimes misunderstand this: what is gravity? Gravity is not a magnet inside the earth that is pulling us.

That idea was debunked a long time ago, but people still feel there is a big magnet inside the earth pulling us. No, that is not gravity. Do you know what gravity is? Stretch out a simple cloth and place a big planet like Earth on it. The cloth sinks down. Now if you throw anything onto that cloth, it rolls toward the earth. That is gravity. The cloth here is the structure of space and time, and the earth has bent it.

Because of this the cloth is curved, and now everything falls toward the earth. Every object's gravity works the same way: it bends space and time. Nothing in this world can pass without information. What happens when you press the TV remote? Photons quickly carry the information and tell the TV to turn off. If you are calling someone or talking on the radio, it is radio frequency that carries your message.

It travels very fast, faster than you can imagine; you talk easily and smoothly, the message reaches them, and they send a message back to you. Without the passing of information, nothing can happen in the world; the world would become null and void. So how could gravity work without information? Something must be carrying it. We learned this kind of thing long ago: when electrons were discovered, the electricity we have today became possible. If you had told someone 500, 700, or 1000 years ago that electricity is produced because of electrons, they would have reacted the way people react to Elon Musk today. Many scientists believe there are gravitons, which carry the message of gravity, carrying the message of the earth's gravity to other objects: come to me, because I am at the bottom of the trampoline. This is the rule, the code written by whoever created and simulated it. We have found electrons and radio waves, but we have not found gravitons to date, and we have not understood how they work. We have studied other things very well, but not gravity. Elon Musk also believes that no one has been able to figure out gravity for many years, and that figuring it out requires the strongest computing power in our world.

And that is what he is creating in space. But what will this do? If by any chance we got close to gravitons, then the way we use radio frequency or photons today to pass information, or do many things with electrons, we could use gravitons on any body to make it massless, because it is the gravitons that carry the information, not gravity itself.

If gravitons come under our control, we will find some methodology and that body will become massless. It will not be destroyed; it will become massless, gravity will not affect it, and it will start floating. That means we could travel around the entire universe within two or three days, because why would we need fuel at all?

Top Flutter App Development Company

Flutter is a UI framework from Google (a subsidiary of Alphabet Inc.), first released in 2017. It provides a single codebase that can be used to build web applications and mobile applications for both Android and iOS. Previously, we had to use Java and XML for Android and Swift for iOS; Flutter solves this problem by being cross-platform. Startups in particular should consider Flutter over native development. Flutter uses the Dart language. Its package repository is pub.dev, where developers can find the packages they need, such as payment gateways, state management, sliders, SMS, etc.

Widgets:- In Flutter, everything is a widget: child widgets, stateless widgets, stateful widgets.

Editor:- We can develop Flutter apps in editors like VS Code, Android Studio, etc.

Run:- We can run Flutter apps on any device, such as an Android or iOS smartphone, a browser like Chrome or Edge, or any installed emulator.

APK:- We can build an APK to run on any Android smartphone.

Startups:- Best for any business that wants to build its own application, such as ecommerce, education LMS, water purification booking, wifi booking, matrimonial, bike taxi booking, online food ordering, etc.

Live:- To publish an application on the Play Store, a business has to purchase a Play Console account, a one-time fee of about 2000/- INR. For iOS, the Apple Developer Program costs around 10000/- INR; note that it is billed yearly, not as a lifetime purchase.

Cross platform:- We can build applications for any device: desktop, Android, iOS.

AI:- Many AI platforms, such as ChatGPT, DeepSeek, Claude, and Grok, can help with the design and development of any kind of application, and many AI portals now generate full-stack applications for web and mobile. With AI you can solve complex problems in seconds, provided the developer knows how to proceed by reading the docs and following the steps properly. Some AI websites have limitations, such as a cap on query length. You can build designs from scratch whenever needed. For the best results, you can take a premium subscription. ChatGPT, Grok, Claude, and DeepSeek are generative technologies: they answer from the data they were trained on, and some of them also send the prompt to a search engine, find relevant sources, check them, and return the result. Many AI websites depend on search APIs to get real-time data from the internet. According to recent news, Google limited the paginated results returned by its search API, reportedly to increase revenue, since AI websites depend on it and Google has indexed more than a billion websites. AI websites need a large number of servers, so they can choose AWS or their own data centers to maintain load balancers, backups, Kafka, ZooKeeper, etc.

Ecommerce:- We can create shopping mobile applications in any vertical, such as B2B, B2C, D2C, etc., with features like home page, search, categories, products, add to cart, checkout, payment, order tracking, privacy policy, terms and conditions, shipping policy, delivery policy, returns and refund policy, login, register, etc.

Matrimonial:- We can build matrimonial applications like shaadi.com and bharatmatrimony.com, with features like login and signup using OTP verification, profile creation, subscriptions, profile match finder, payments through a gateway, access to contact numbers and chats after purchasing a subscription, privacy page, returns and refunds page, etc.

Online Taxi Booking:- We can build online bike taxi mobile applications like Rapido, Ola, and Uber, with features like register and sign-in using OTP verification, profile details, and ride booking from start point to destination. The app then finds the nearest rider at that location; once the captain accepts, the ride starts using the Google Maps API. On reaching the destination, the captain completes the ride and collects payment via UPI or cash, as provided by the platform. The user can see all the rides he has taken, payments, etc. The captain can see all the rides he has provided to customers and the payouts transferred on a particular date, and can check the insurance provided by the company, bank account details, etc.

E-Learning LMS:- EdTech businesses can create LMS applications like Physics Wallah, Allen, Careerwill, Selection Way, etc., with features like login and signup using a mobile number and a list of all available courses. Learners can buy a relevant course by enrolling. After enrolling, they can see their purchased courses; clicking a course shows its content, such as a video player with notes, Q&A, relevant queries, assignments, etc. A ticket feature is also available for users who face any issue.

Recruitment App:- For any entity that wants to provide recruitment services like Indeed, Apna, WorkIndia, etc. Users can sign up in the app and provide basic details like name, email, contact, updated CV, etc. Based on their interests or job profile category, users can apply to jobs with the benefits offered by companies, such as salary range, cab facility, hybrid work, travel allowance, CL, GH, etc. Users get notified about new job openings.

There are many categories of mobile application that can be developed using Flutter. You can use a backend like Laravel or any other; for the database you can use MySQL or Firebase, and for push notifications you can use Firebase push notifications. In the Play Console you can check how many times the app has been downloaded.

Webgridsolution:- A global tech provider offering multiple services such as website design, website development, mobile application development, SaaS platforms, digital marketing, etc.

Webtrills:- India’s leading mobile application development services provider, based in Delhi, offering native and hybrid application development.

Webkul:- An online tech provider of services like development, marketing, etc. Its main office is in the Noida region.

Appsinvo:- A leading full-stack mobile application company operating across the country, with native and IoT-enabled applications.

Winklix:- Winklix offers mobile app development services for various platforms, including iOS, Android, React Native, Flutter, and Salesforce.

For any enquiries,

Contact support info@bindaasboldilse.com

Which AI is best, and which should we use?

Which AI is Best? A Practical Guide Based on Real Use Cases

Artificial Intelligence (AI) has become a part of everyday work, whether you're creating content, coding, designing, or automating business processes. But one common question people ask is: which AI is actually the best? The fair answer is that it depends on what you need it for.

In this guide, I’ll break things down in simple, natural language so you can clearly understand which AI tools are best in different areas and how to choose the right one for your needs.

Understanding “Best AI” – It’s Not One-Size-Fits-All

Before comparing tools, it’s important to understand that no single AI is perfect for everything. Some AI tools are strong in writing, others in coding, some in image creation, and others in automation.

So instead of asking “Which AI is best among all of these?”, a better question is:

“Which AI is best for my specific task?”

Best AI for Content Writing

If your goal is to create blog posts, SEO content, social media captions, or marketing copy, then conversational AI tools are the best fit.

Top Choices:

Why They’re Good:

These tools understand tone, context, and structure. They can generate SEO-friendly content that feels natural—not robotic.

Best For:

Best AI for Coding and Development

If you're a developer or running a tech business, AI coding assistants can save a lot of time.

Top Choices:

Why They’re Good:

They can generate code, fix errors, explain logic, and even suggest better approaches.

Best For:

Best AI for Image Generation

Need designs, banners, social media creatives, or marketing visuals? AI image generators are the best option.

Top Choices:

Why They’re Good:

These tools can create high-quality images from simple text prompts. Perfect for branding and marketing.

Best For:

Best AI for Business Automation

If you want to automate tasks like customer support, WhatsApp messaging, or workflows, automation-focused AI is ideal.

Top Choices:

Why They’re Good:

They connect apps and automate repetitive tasks, saving time and reducing manual work.

Best For:

Best AI for SEO and Marketing

For digital marketing and ranking on Google, some AI tools are designed specifically for SEO.

Top Choices:

Why They’re Good:

They help with keyword research, content writing, and in-depth competitor analysis.

Best For:

Key Factors to Choose the Right AI

When selecting the best AI, keep these points in mind:

1. Purpose

What do you need it for—writing, coding, design, or automation?

2. Ease of Use

Some AI tools are beginner-friendly, while others require technical knowledge.

3. Pricing

Free tools are a good way to start, but premium tools usually offer more features.

4. Output Quality

Always test the results. The best AI should give accurate and human-like output.
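
If you want to compare a few tools side by side, the four factors above can be turned into a simple weighted score. The snippet below is only a rough sketch of that idea: the weights, the 1 to 5 rating scale, and the names "Tool A" and "Tool B" are made-up placeholders, not real products or measured results. Rate each tool yourself after testing it, then adjust the weights to match what matters most for your work.

```python
from dataclasses import dataclass

# Hypothetical weights for the four factors (they sum to 1.0).
# These are assumptions for illustration; change them to fit your priorities.
WEIGHTS = {
    "purpose": 0.40,         # how well the tool fits your task
    "ease_of_use": 0.20,     # beginner-friendly vs. needs technical knowledge
    "pricing": 0.15,         # higher rating = better value for money
    "output_quality": 0.25,  # accuracy and how natural the output reads
}


@dataclass
class Tool:
    name: str
    scores: dict  # factor name -> your own rating from 1 (poor) to 5 (excellent)


def weighted_score(tool, weights=WEIGHTS):
    """Combine the per-factor ratings into one number using the weights."""
    return sum(weight * tool.scores[factor] for factor, weight in weights.items())


def rank_tools(tools, weights=WEIGHTS):
    """Return the tools sorted from highest to lowest weighted score."""
    return sorted(tools, key=lambda t: weighted_score(t, weights), reverse=True)


# Placeholder candidates with invented ratings.
candidates = [
    Tool("Tool A", {"purpose": 5, "ease_of_use": 3, "pricing": 2, "output_quality": 4}),
    Tool("Tool B", {"purpose": 3, "ease_of_use": 5, "pricing": 5, "output_quality": 3}),
]

for tool in rank_tools(candidates):
    print(f"{tool.name}: {weighted_score(tool):.2f}")
```

The point of weighting "purpose" highest in this sketch is that a cheap, easy tool is still the wrong choice if it does not fit your task. That weighting is a judgment call, so treat the ranking as a starting point for discussion rather than a final answer.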

Final Thoughts: Which AI Should You Choose?

In our view, there is no single “best AI” for everything. The right choice depends on your goals.

The smartest approach is to combine multiple AI tools depending on your needs.

Pro Tip for Businesses

If you're running a business (especially digital services like websites, WhatsApp API, or marketing), using AI smartly can give you a huge competitive advantage.

You can:

Conclusion

AI is not about replacing humans—it’s about making work faster, smarter, and more efficient. The “best AI” is the one that solves your problem effectively.

Start with one tool, experiment, and gradually build your AI toolkit. That’s the real way to get the most out of artificial intelligence.